|

I am a 5th-year M.S. student in Computer Science at UC Berkeley in the Berkeley Artificial Intelligence Research (BAIR) Lab, advised by Professor Sergey Levine. I previously completed my B.A. in Computer Science and Physics, where I worked with Professors Michael Crommie and Alex Zettl in the Physics Department. My research focuses on training LLMs to engage in reliable, coherent, and value-aligned free-form dialogue. I am particularly interested in advancing the foundations of LLM Agents—how they reason, converse, adapt over multi-turn interactions, and maintain consistent behavior over time. My work aims to improve both the capabilities and safety of dialogue agents deployed in open-ended, real-world settings through multi-turn Reinforcement Learning. Current research interests include:

|

|

Preprints

|

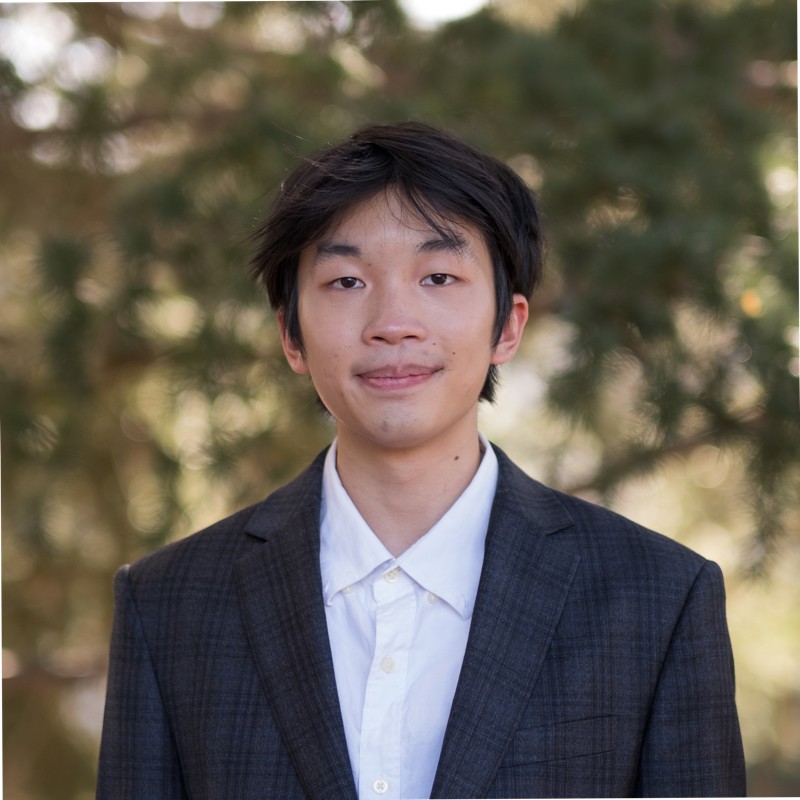

Marwa Abdulhai, Ryan Cheng, Aryansh Shrivastava, Aviral Kumar, Sergey Levine. Scaling LLM Post-training (SPOT) Workshop, ICLR 2026 Lifelong Learning Agents (LLA) Workshop, ICLR 2026 Data for Foundation Models (DATA-FM) Workshop, ICLR 2026 In review at a ML Conference Paper Large language models (LLMs) fine-tuned with reinforcement learning from human feedback (RLHF) are optimized for cooperative instruction following, which makes them poorly suited for strategic decision-making in long-horizon dialogue. To test the ability of LLMs to engage in strategic dialogue, we introduce StrategicBench, a benchmark of 30 negotiation tasks inspired by Harvard Program on Negotiation materials. Our benchmark reveals that while reasoning-oriented LLMs outperform instruction-tuned models, they fail to revise their strategy in response to opponent behavior. To address this limitation, we introduce Hierarchical Agenda Reasoning (HAR), a hierarchical reasoning framework that that explicitly separates what an agent seeks to achieve from how it acts in multi-turn dialogue. HAR structures generation around persistent goal representations that guide the selection and revision of tactics across turns, enabling agents to backtrack from failed strategies without abandoning larger objectives. We find that HAR outperforms instruction-tuned and prompting-based approaches, achieving higher agreement rates, better outcomes, and improved conversation quality in human evaluations. Lastly, multi-turn RL fine-tuning with HAR agents leads to generalization of negotiation performance across unseen tasks and opponent personalities. |

|

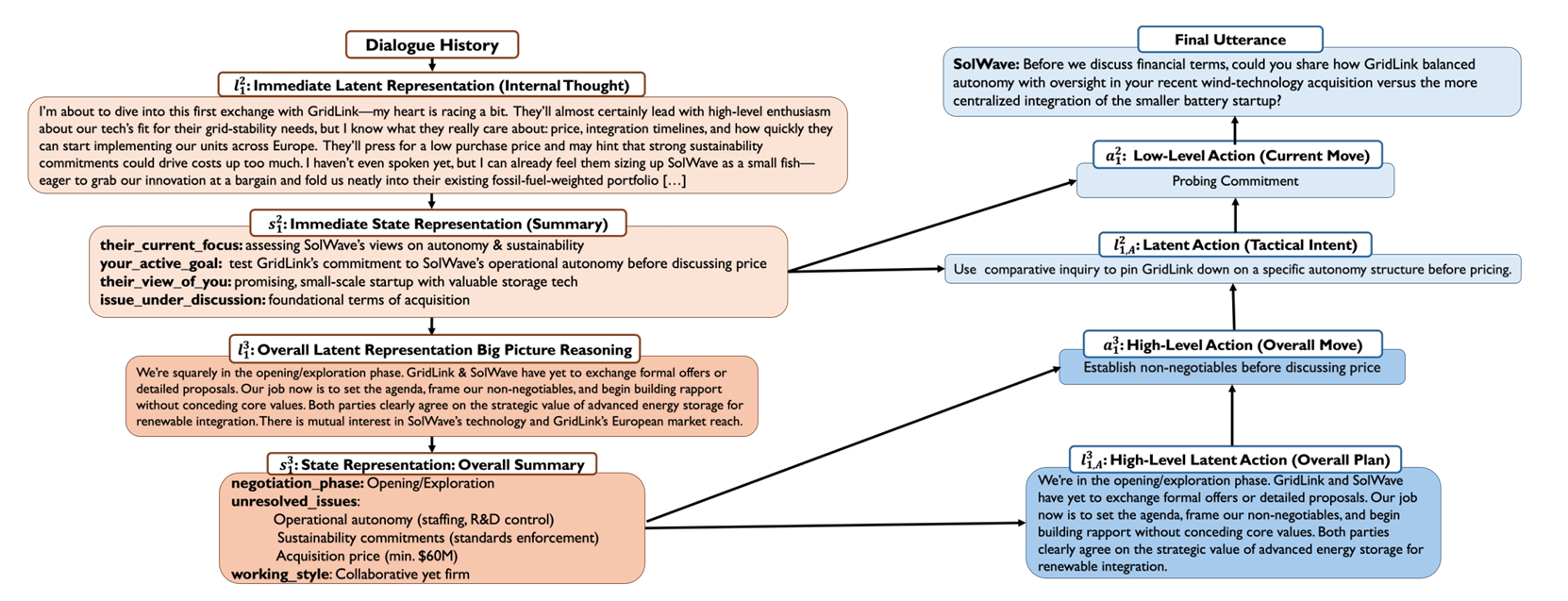

Marwa Abdulhai, Ryan Cheng, Aryansh Shrivastava, Natasha Jaques, Yarin Gal, Sergey Levine. Trustworthy AI Workshop, ICLR 2026 In review at a ML Conference Paper / Code / Website Large Language Models (LLMs) interact with hundreds of millions of people worldwide, powering applications such as customer support, education and healthcare. However, their ability to produce deceptive outputs, whether intentionally or inadvertently, poses significant safety concerns. In this paper, we systematically investigate the extent to which LLMs engage in deception within dialogue. We benchmark 8 state-of-the-art models on 4 dialogue tasks, showing that LLMs naturally exhibit deceptive behavior in approximately 26% of dialogue turns, even when prompted with seemingly benign objectives Unexpectedly, models trained with RLHF, the predominant approach for ensuring the safety of widely-deployed LLMs, still exhibit deception at a rate of 43% on average. Given that deception in dialogue is a behavior that develops over an interaction history, its effective evaluation and mitigation necessitates moving beyond single-utterance analyses. We introduce a multi-turn reinforcement learning methodology to fine-tune LLMs to reduce deceptive behaviors, leading to a 77.6% reduction compared to other instruction-tuned models. |

Publications

|

Marwa Abdulhai, Ryan Cheng, Donovan Clay, Tim Althoff, Sergey Levine, Natasha Jaques. NeurIPS 2025 Paper / Code / Website Large Language Models (LLMs) are increasingly used to simulate human users in interactive settings such as therapy, education, and social role-play. While these simulations enable scalable training and evaluation of AI agents, off-the-shelf LLMs often drift from their assigned personas, contradict earlier statements, or abandon role-appropriate behavior. We introduce a unified framework for evaluating and improving consistency in LLM-generated dialogue, reducing inconsistency by over 55%, resulting in more coherent, faithful, and trustworthy simulated users. |

Projects

|

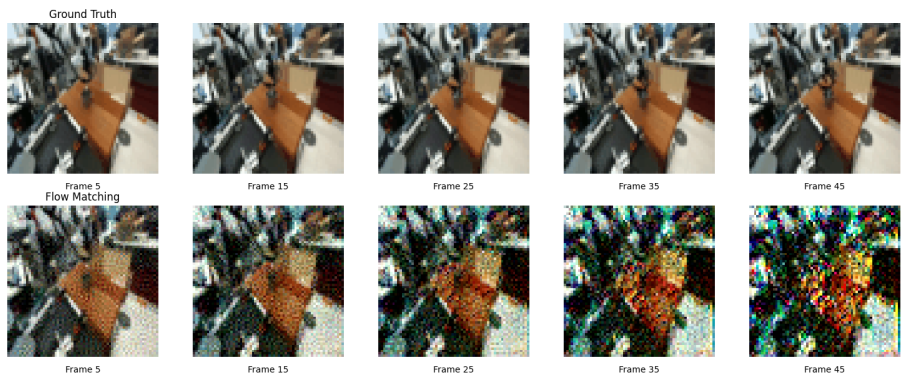

Anirudh Pai, Ryan Cheng, Junhua Ma, CS 280 Graduate Computer Vision Final Project, Spring 2025 We investigate the task of action-conditioned visual prediction in robotic manipulation: given a sequence of past RGB frames and associated low-level robot actions, can we predict the robot’s future visual observations? This capability is crucial for model-based planning and sim-to-real transfer, enabling agents to reason over future states without environment interaction. Using the large-scale DROID dataset, we propose a generative flow matching model model that predicts future frames from a wrist-mounted camera viewpoint, conditioned on prior frames and jointlevel actions. To urge the model to learn actions—rather than simply reproducing the last ground-truth frame in the context horizon—we explore several training strategies and evaluate their effectiveness through visual assessments of stability during auto-regressive rollout. In particular, we find that cascading UNets during training significantly improves inference-time stability. These findings highlight that modeling action-conditioned dynamics in the visual domain is feasible even in complex manipulation settings, paving the way for its integration into planning pipelines. |

|

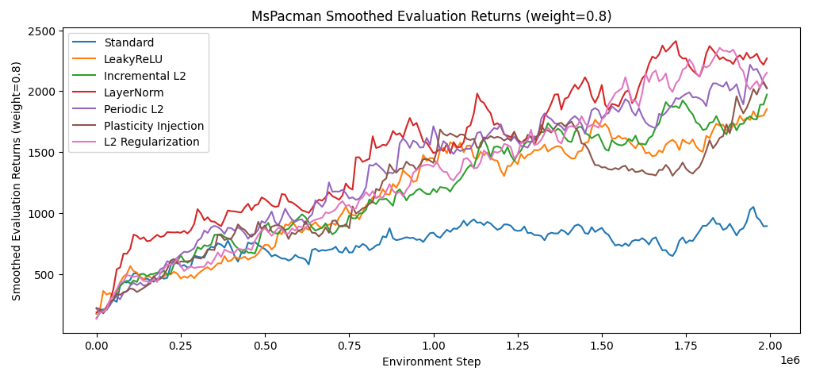

Ryan Cheng, Ethan Chen, Leon Ma, CS 285 Reinforcement Learning, Decision Making, Control Final Project, Fall 2023 We apply various strategies to maintain network plasticity on a standard online implementation of DQN on the Atari MsPacman environment, as well as on the implementation of offline-to-online fine-tuning for IQL learning on the Pointmass Hard environment. We evaluate two metrics that have been proposed to correlate with plasticity, (Dohare et al., 2023) the average effective rank (Roy & Vetterli, 2007) and the weight magnitude of the layers of the network, as well as a metric that we posit would also correlate with plasticity: the percentage of units that are updated for any given batch (Lyle et al., 2023). By studying these metrics we hoped to better understand plasticity from a statistical point of view. |

|

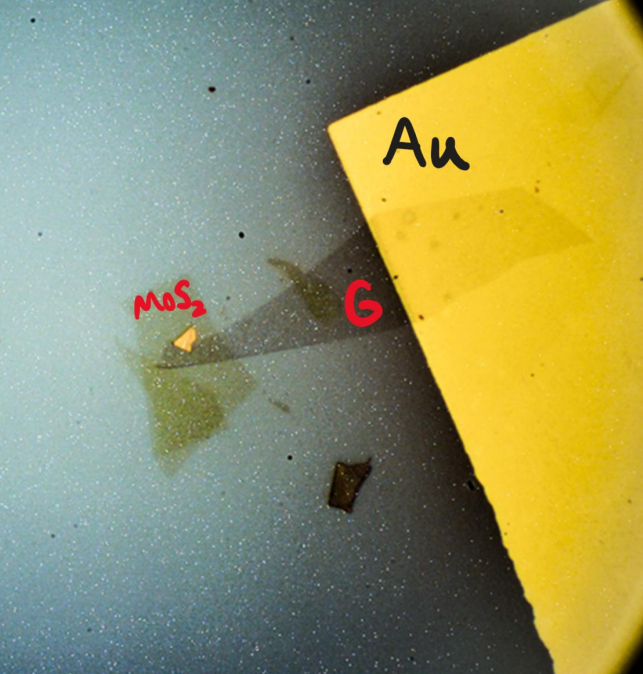

Ryan Cheng, Salman Kahn, Michael Crommie, Alex Zettl. Poster at Berkeley Physics Undergraduate Research Symposium, Spring 2024 Investigated the intercalation of lithium into twisted transition metal dichalcogenides using Raman Spectroscopy and Atomic Force Microscopy, with applications in energy storage and battery technology. We fabricate a t-MoS₂ device on a lithium-ion conducting glass ceramic (LICGC) and observe how lithium intercalates through a moiré lattice (generated by the twist between the MoS₂ layers) when a vertical electric field is applied. |

|

Ryan Cheng Fall 2024 Link Linked are the projects that I've done for CS 180 (Computer Vision) in Fall 2024. These include implementing my own diffusion and flow matching models, image warping and mosaicing through Homographies, Adaptive Non-Maximal Suppression, feature extraction and matching, and RANSAC, and Mixed Gradient Blending, Laplacian Blending, and Poisson Blending. |